A quantum computer is a device for computation that makes direct use of quantum mechanical phenomena, such as superposition and entanglement, to perform operations on data. Quantum computers are different from traditional computers based on transistors. The basic principle behind quantum computation is that quantum properties can be used to represent data and perform operations on these data.[1] A theoretical model is the quantum Turing machine, also known as the universal quantum computer.

Although quantum computing is still in its infancy, experiments have been carried out in which quantum computational operations were executed on a very small number of qubits (quantum bits). Both practical and theoretical research continues, and many national governments and military funding agencies support quantum computing research to develop quantum computers for both civilian and national security purposes, such as cryptanalysis.[2]

If large-scale quantum computers can be built, they will be able to solve certain problems much faster than any current classical computer (for example, integer factorization using Shor's algorithm). Quantum computers do not allow the computation of functions that are not theoretically computable by classical computers, i.e. they do not alter the Church–Turing thesis. The gain is only in efficiency.

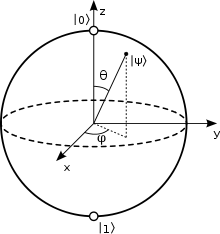

The Bloch sphere is a representation of a qubit, the fundamental building block of quantum computers.

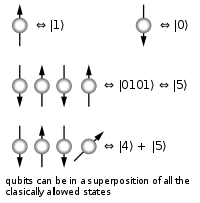

A classical computer has a memory made up of bits, where each bit represents either a one or a zero. A quantum computer maintains a sequence of qubits. A single qubit can represent a one, a zero, or, crucially, any quantum superposition of these; moreover, a pair of qubits can be in any quantum superposition of 4 states, and three qubits in any superposition of 8. In general, a quantum computer with $n$ qubits can be in an arbitrary superposition of up to $2^n$ different states simultaneously (whereas a normal computer can only be in one of these $2^n$ states at any one time). A quantum computer operates by manipulating those qubits with a fixed sequence of quantum logic gates. The sequence of gates to be applied is called a quantum algorithm.

An example of an implementation of qubits for a quantum computer could start with the use of particles with two spin states: "down" and "up".

Bits vs. qubits

Qubits are made up of controlled particles and the means of control (e.g. devices that trap particles and switch them from one state to another).[3]

Consider first a classical computer that operates on a three-bit register. The state of the computer at any time is a probability distribution over the $2^3 = 8$ different three-bit strings 000, 001, 010, 011, 100, 101, 110, 111. If it is a deterministic computer, then it is in exactly one of these states with probability 1. However, if it is a probabilistic computer, then there is a possibility of it being in any one of a number of different states. We can describe this probabilistic state by eight nonnegative numbers $a, b, c, d, e, f, g, h$ (where $a$ is the probability that the computer is in state 000, $b$ is the probability that it is in state 001, and so on). There is a restriction that these probabilities sum to 1.

The state of a three-qubit quantum computer is similarly described by an eight-dimensional vector $(a, b, c, d, e, f, g, h)$, called a ket. However, instead of the coefficients adding to one, the sum of the squares of the coefficient magnitudes, $|a|^2 + |b|^2 + \cdots + |h|^2$, must equal one. Moreover, the coefficients are complex numbers. Since states are represented by complex wavefunctions, two states being added together will undergo interference. This is a key difference between quantum computing and probabilistic classical computing.[4]

If you measure the three qubits, then you will observe a three-bit string. The probability of measuring a string equals the squared magnitude of that string's coefficient (in our example, the probability that we read the state as 000 is $|a|^2$, the probability that we read it as 001 is $|b|^2$, and so on). Thus a measurement of the quantum state with coefficients $(a, b, \ldots, h)$ gives the classical probability distribution $(|a|^2, |b|^2, \ldots, |h|^2)$. We say that the quantum state "collapses" to a classical state.
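The measurement rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the amplitude values and the helper names (`normalize`, `measurement_distribution`, `measure`) are made up for the example and do not come from any particular library.

```python
import math
import random


def normalize(amplitudes):
    """Scale complex amplitudes so that the squared magnitudes sum to one."""
    norm = math.sqrt(sum(abs(a) ** 2 for a in amplitudes))
    return [a / norm for a in amplitudes]


def measurement_distribution(amplitudes):
    """Map each bit string to its measurement probability |amplitude|^2."""
    n = (len(amplitudes) - 1).bit_length()  # number of qubits, e.g. 3 for 8 amplitudes
    return {format(i, f"0{n}b"): abs(a) ** 2 for i, a in enumerate(amplitudes)}


def measure(amplitudes, rng=random):
    """Sample one bit string from the distribution given by the amplitudes."""
    dist = measurement_distribution(amplitudes)
    labels = list(dist)
    return rng.choices(labels, weights=[dist[s] for s in labels], k=1)[0]


# Hypothetical three-qubit state (a, b, ..., h); the values are arbitrary.
state = normalize([1, 1j, 0, 0, 0, 0, -1, 1 + 1j])
probs = measurement_distribution(state)
print(probs)
print(measure(state))
```

Note that the amplitudes may be negative or complex, yet the resulting probabilities are always nonnegative real numbers that sum to one.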
Note that an eight-dimensional vector can be specified in many different ways, depending on what basis you choose for the space. The basis of three-bit strings 000, 001, ..., 111 is known as the computational basis, and is often convenient, but other bases of unit-length, orthogonal vectors can also be used. Ket notation is often used to make explicit the choice of basis. For example, the state $(a, b, c, d, e, f, g, h)$ in the computational basis can be written as

$$a\,|000\rangle + b\,|001\rangle + c\,|010\rangle + d\,|011\rangle + e\,|100\rangle + f\,|101\rangle + g\,|110\rangle + h\,|111\rangle.$$

The computational basis for a single qubit (two dimensions) is $|0\rangle = (1, 0)$, $|1\rangle = (0, 1)$.

Note that although recording a classical state of $n$ bits, a $2^n$-dimensional probability distribution, requires an exponential number of real numbers, practically we can always think of the system as being exactly one of the $n$-bit strings; we just don't know which one. Quantum mechanically, this is not the case, and all $2^n$ complex coefficients need to be kept track of to see how the quantum system evolves. For example, a 300-qubit quantum computer has a state described by $2^{300}$ (approximately $10^{90}$) complex numbers, more than the number of atoms in the observable universe.

Operation

While a classical three-bit state and a quantum three-qubit state are both eight-dimensional vectors, they are manipulated quite differently for classical or quantum computation. For computing in either case, the system must be initialized, for example into the all-zeros string, $|000\rangle$, corresponding to the vector $(1, 0, 0, 0, 0, 0, 0, 0)$. In classical randomized computation, the system evolves according to the application of stochastic matrices, which preserve the property that the probabilities add up to one (i.e., they preserve the $L_1$ norm). In quantum computation, on the other hand, allowed operations are unitary matrices, which are effectively rotations (they preserve the property that the squared magnitudes add up to one, i.e., the Euclidean or $L_2$ norm). (Exactly which unitaries can be applied depends on the physics of the quantum device.)
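The contrast between stochastic and unitary evolution can be illustrated on a single bit or qubit. The sketch below is an assumption-laden toy: the choice of a fair-coin stochastic matrix and of the Hadamard gate as the unitary is ours, made only because both are small and standard.

```python
import math


def apply(matrix, vector):
    """Multiply a square matrix (list of rows) by a column vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def l1_norm(vector):
    return sum(abs(x) for x in vector)


def l2_norm(vector):
    return math.sqrt(sum(abs(x) ** 2 for x in vector))


s = 1 / math.sqrt(2)
FAIR_COIN = [[0.5, 0.5], [0.5, 0.5]]  # stochastic: each column sums to one
HADAMARD = [[s, s], [s, -s]]          # unitary: preserves the L2 norm

zero = [1.0, 0.0]  # the classical bit 0, or the qubit state |0>

# Classical randomization: once the bit is randomized it stays 50/50.
classical_once = apply(FAIR_COIN, zero)
classical_twice = apply(FAIR_COIN, classical_once)

# Quantum: the first Hadamard gives an equal superposition, the second
# makes the two paths to |1> cancel (interference) and restores |0>.
quantum_once = apply(HADAMARD, zero)
quantum_twice = apply(HADAMARD, quantum_once)

print(classical_twice, l1_norm(classical_twice))
print(quantum_twice, l2_norm(quantum_twice))
```

The second application of the Hadamard gate undoes the first, which is a small instance of a unitary operation being reversed, whereas the stochastic map cannot be undone.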
Because unitary operations are rotations, and rotations can be undone by rotating backward, quantum computations are reversible. (Technically, quantum operations can be probabilistic combinations of unitaries, so quantum computation really does generalize classical computation. See quantum circuit for a more precise formulation.)

Finally, upon termination of the algorithm, the result needs to be read off. In the case of a classical computer, we sample from the probability distribution on the three-bit register to obtain one definite three-bit string, say 000. Quantum mechanically, we measure the three-qubit state, which is equivalent to collapsing the quantum state down to a classical distribution (with the coefficients in the classical state being the squared magnitudes of the coefficients for the quantum state, as described above) followed by sampling from that distribution. Note that this destroys the original quantum state. Many algorithms will only give the correct answer with a certain probability; however, by repeatedly initializing, running and measuring the quantum computer, the probability of getting the correct answer can be increased.

For more details on the sequences of operations used for various quantum algorithms, see universal quantum computer, Shor's algorithm, Grover's algorithm, Deutsch-Jozsa algorithm, amplitude amplification, quantum Fourier transform, quantum gate, quantum adiabatic algorithm and quantum error correction.

Potential

Integer factorization is believed to be computationally infeasible with an ordinary computer for large integers if they are the product of few prime numbers (e.g., products of two 300-digit primes).[5] By comparison, a quantum computer could efficiently solve this problem using Shor's algorithm to find the factors. This ability would allow a quantum computer to decrypt many of the cryptographic systems in use today, in the sense that there would be a polynomial time (in the number of digits of the integer) algorithm for solving the problem.
In particular, most of the popular public key ciphers are based on the difficulty of factoring integers (or the related discrete logarithm problem, which can also be solved by Shor's algorithm), including forms of RSA. These are used to protect secure Web pages, encrypted email, and many other types of data. Breaking these would have significant ramifications for electronic privacy and security.

However, other existing cryptographic algorithms do not appear to be broken by these algorithms.[6][7] Some public-key algorithms are based on problems other than the integer factorization and discrete logarithm problems to which Shor's algorithm applies, like the McEliece cryptosystem, which is based on a problem in coding theory.[6][8] Lattice-based cryptosystems are also not known to be broken by quantum computers, and finding a polynomial time algorithm for solving the dihedral hidden subgroup problem, which would break many lattice-based cryptosystems, is a well-studied open problem.[9] It has been proven that applying Grover's algorithm to break a symmetric (secret key) algorithm by brute force requires roughly $2^{n/2}$ invocations of the underlying cryptographic algorithm, compared with roughly $2^n$ in the classical case,[10] meaning that symmetric key lengths are effectively halved: AES-256 would have the same security against an attack using Grover's algorithm that AES-128 has against classical brute-force search (see Key size). Quantum cryptography could potentially fulfill some of the functions of public key cryptography.

Besides factorization and discrete logarithms, quantum algorithms offering a more than polynomial speedup over the best known classical algorithm have been found for several problems,[11] including the simulation of quantum physical processes from chemistry and solid state physics, the approximation of Jones polynomials, and solving Pell's equation.
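The halving of effective symmetric key length under Grover's algorithm, mentioned above, is simple arithmetic. The following sketch only counts invocations according to the rough $2^n$ and $2^{n/2}$ figures quoted in the text; the function names are illustrative, and constant factors and the cost of running each quantum invocation are ignored.

```python
import math


def classical_bruteforce_calls(key_bits):
    """Roughly 2^n invocations to search an n-bit key space classically."""
    return 2 ** key_bits


def grover_calls(key_bits):
    """Roughly 2^(n/2) invocations with Grover's algorithm (square root of 2^n)."""
    return math.isqrt(2 ** key_bits)


for bits in (128, 256):
    print(bits, classical_bruteforce_calls(bits), grover_calls(bits))

# AES-256 against Grover costs about as many invocations as
# AES-128 against classical brute force.
print(grover_calls(256) == classical_bruteforce_calls(128))
```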
For these super-polynomial speedups, no mathematical proof has been found that shows that an equally fast classical algorithm cannot be discovered, although this is considered unlikely.

For some problems, quantum computers offer a polynomial speedup. The most well-known example of this is quantum database search, which can be solved by Grover's algorithm using quadratically fewer queries to the database than are required by classical algorithms. In this case the advantage is provable. Several other examples of provable quantum speedups for query problems have subsequently been discovered, such as for finding collisions in two-to-one functions and evaluating NAND trees.

Consider a problem that has these four properties:

1. The only way to solve it is to guess answers repeatedly and check them,

An example of this is a password cracker that attempts to guess the password for an encrypted file (assuming that the password has a maximum possible length).

For problems with all four properties, the time for a quantum computer to solve the problem will be proportional to the square root of n, where n is the number of possible answers to check. That can be a very large speedup, reducing some problems from years to seconds. It can be used to attack symmetric ciphers such as Triple DES and AES by attempting to guess the secret key. Grover's algorithm can also be used to obtain a quadratic speed-up [over a brute-force search] for a class of problems known as NP-complete.

Since chemistry and nanotechnology rely on understanding quantum systems, and such systems are impossible to simulate in an efficient manner classically, many believe quantum simulation will be one of the most important applications of quantum computing.[12]

There are a number of practical difficulties in building a quantum computer, and thus far quantum computers have only solved trivial problems. David DiVincenzo, of IBM, listed the following requirements for a practical quantum computer:[13]

* scalable physically to increase the number of qubits;

One of the greatest challenges is controlling or removing quantum decoherence. This usually means isolating the system from its environment, as the slightest interaction with the external world would cause the system to decohere. This effect is irreversible, as it is non-unitary, and is usually something that should be highly controlled, if not avoided. Decoherence times for candidate systems, in particular the transverse relaxation time $T_2$ (for NMR and MRI technology, also called the dephasing time), typically range between nanoseconds and seconds at low temperature.[4]

These issues are more difficult for optical approaches, as the timescales are orders of magnitude shorter, and an often cited approach to overcoming them is optical pulse shaping. Error rates are typically proportional to the ratio of operating time to decoherence time, hence any operation must be completed much more quickly than the decoherence time.

If the error rate is small enough, it is thought to be possible to use quantum error correction, which corrects errors due to decoherence, thereby allowing the total calculation time to be longer than the decoherence time. An often cited figure for the required error rate in each gate is $10^{-4}$. This implies that each gate must be able to perform its task in one 10,000th of the decoherence time of the system.

Meeting this scalability condition is possible for a wide range of systems. However, the use of error correction brings with it the cost of a greatly increased number of required qubits. The number required to factor integers using Shor's algorithm is still polynomial, and thought to be between $L$ and $L^2$, where $L$ is the number of bits in the number to be factored; error correction algorithms would inflate this figure by an additional factor of $L$. For a 1000-bit number, this implies a need for about $10^4$ qubits without error correction.[14] With error correction, the figure would rise to about $10^7$ qubits.
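The estimates in this passage can be reproduced with a short calculation. This is a back-of-the-envelope sketch only: it takes the text's rough scalings at face value (error rate equal to gate time divided by decoherence time, qubit count between $L$ and $L^2$, an extra factor of $L$ for error correction), and the function names and the one-millisecond decoherence time are hypothetical.

```python
def max_gate_time(decoherence_time_s, error_rate=1e-4):
    """Longest gate time allowed if error rate ~ gate time / decoherence time."""
    return decoherence_time_s * error_rate


def qubit_range(bits):
    """Range (L, L^2) quoted for the qubits Shor's algorithm needs for an L-bit number."""
    return bits, bits ** 2


L = 1000                                  # bits in the number to be factored
low, high = qubit_range(L)
without_correction = 10 ** 4              # figure quoted in the text for L = 1000
with_correction = without_correction * L  # extra factor of L for error correction

print(f"qubits needed lie between {low} and {high}")
print(f"with error correction: about {with_correction} qubits")
# Hypothetical system with a 1 ms decoherence time:
print(f"gate time must be under {max_gate_time(1e-3):.1e} s")
```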
Note that the computation time is about $L^2$, or about $10^7$ steps; at 1 MHz, that is about 10 seconds.

A very different approach to the stability-decoherence problem is to create a topological quantum computer with anyons, quasi-particles used as threads and relying on braid theory to form stable logic gates.[15][16]

Developments

There are a number of quantum computing candidates, among those:

* Superconductor-based quantum computers (including SQUID-based quantum computers)[17]

The large number of candidates demonstrates that the topic, in spite of rapid progress, is still in its infancy. But at the same time there is also a vast amount of flexibility.

In 2005, researchers at the University of Michigan built a semiconductor chip which functioned as an ion trap. Such devices, produced by standard lithography techniques, may point the way to scalable quantum computing tools.[26] An improved version was made in 2006.[citation needed]

In 2009, researchers at Yale University created the first rudimentary solid-state quantum processor. The two-qubit superconducting chip was able to run elementary algorithms. Each of the two artificial atoms (or qubits) was made up of a billion aluminum atoms, but acted like a single atom that could occupy two different energy states.[27][28]

Another team, working at the University of Bristol, also created a silicon-based quantum computing chip, based on quantum optics. The team was able to run Shor's algorithm on the chip.[29]

Relation to computational complexity theory

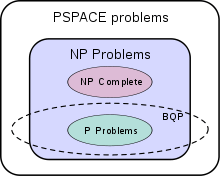

The suspected relationship of BQP to other problem spaces.[30]

The class of problems that can be efficiently solved by quantum computers is called BQP, for "bounded error, quantum, polynomial time". Quantum computers only run probabilistic algorithms, so BQP on quantum computers is the counterpart of BPP on classical computers. It is defined as the set of problems solvable with a polynomial-time algorithm whose probability of error is bounded away from one half.[30] A quantum computer is said to "solve" a problem if, for every instance, its answer will be right with high probability. If that solution runs in polynomial time, then that problem is in BQP.

BQP is contained in the complexity class #P (or more precisely in the associated class of decision problems $P^{\#P}$),[31] which is a subclass of PSPACE.

BQP is suspected to be disjoint from NP-complete and a strict superset of P, but that is not known. Both integer factorization and discrete log are in BQP. Both of these problems are NP problems suspected to be outside BPP, and hence outside P. Both are suspected to not be NP-complete. There is a common misconception that quantum computers can solve NP-complete problems in polynomial time. That is not known to be true, and is generally suspected to be false.[31]

Although quantum computers may be faster than classical computers, those described above cannot solve any problems that classical computers cannot solve, given enough time and memory (however, those amounts might be practically infeasible). A Turing machine can simulate these quantum computers, so such a quantum computer could never solve an undecidable problem like the halting problem. The existence of "standard" quantum computers does not disprove the Church–Turing thesis.[30][page needed]

It has been speculated that theories of quantum gravity, such as M-theory or loop quantum gravity, may allow even faster computers to be built.
Currently, it is an open problem even to define computation in such theories because of the problem of time: there is no obvious way to describe what it means for an observer to submit input to a computer and later receive output.[32]
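The bounded-error condition in the definition of BQP matters because any error probability bounded away from one half can be driven down by repeating the computation and taking a majority vote over the answers. The sketch below computes the exact success probability of such a vote for independent runs; the per-run success probability of 2/3 is a conventional choice assumed here, not a figure from the text, and `majority_success` is an illustrative name.

```python
import math


def majority_success(p, runs):
    """Probability that more than half of `runs` independent runs are correct,
    when each run is correct with probability p (use an odd number of runs)."""
    return sum(
        math.comb(runs, k) * p ** k * (1 - p) ** (runs - k)
        for k in range(runs // 2 + 1, runs + 1)
    )


p = 2 / 3  # assumed per-run success probability
for runs in (1, 3, 11, 101):
    print(runs, majority_success(p, runs))
```

The success probability approaches one as the number of runs grows, so the exact constant chosen for the per-run bound does not change which problems are in the class.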

1. "Quantum Computing with Molecules", article in Scientific American by Neil Gershenfeld and Isaac L. Chuang; a generally accessible overview of quantum computing.

* Derek Abbott, Charles R. Doering, Carlton M. Caves, Daniel M. Lidar, Howard E. Brandt, Alexander R. Hamilton, David K. Ferry, Julio Gea-Banacloche, Sergey M. Bezrukov, and Laszlo B. Kish (2003). "Dreams versus Reality: Plenary Debate Session on Quantum Computing". Quantum Information Processing 2 (6): 449–472. doi:10.1023/B:QINP.0000042203.24782.9a. arXiv:quant-ph/0310130.
* Joachim Stolze; Dieter Suter (2004). Quantum Computing. Wiley-VCH. ISBN 3527404384.
* Ian Mitchell (1998). "Computing Power into the 21st Century: Moore's Law and Beyond". http://citeseer.ist.psu.edu/mitchell98computing.html.
* Rolf Landauer (1961). "Irreversibility and heat generation in the computing process". http://www.research.ibm.com/journal/rd/053/ibmrd0503C.pdf.
* Gordon E. Moore (1965). Cramming more components onto integrated circuits.
* R. W. Keyes (1988). Miniaturization of electronics and its limits.
* M. A. Nielsen; E. Knill; R. Laflamme. "Complete Quantum Teleportation By Nuclear Magnetic Resonance". http://citeseer.ist.psu.edu/595490.html.
* Lieven M. K. Vandersypen; Constantino S. Yannoni; Isaac L. Chuang (2000). Liquid state NMR Quantum Computing.
* Imai Hiroshi; Hayashi Masahito (2006). Quantum Computation and Information. Berlin: Springer. ISBN 3540331328.
* Andre Berthiaume (1997). "Quantum Computation". http://citeseer.ist.psu.edu/article/berthiaume97quantum.html.
* Daniel R. Simon (1994). "On the Power of Quantum Computation". Institute of Electrical and Electronic Engineers Computer Society Press. http://citeseer.ist.psu.edu/simon94power.html.
* "Seminar Post Quantum Cryptology". Chair for communication security at the Ruhr-University Bochum. http://www.crypto.rub.de/its_seminar_ss08.html.
* Laura Sanders (2009). "First programmable quantum computer created". http://www.sciencenews.org/view/generic/id/49951/title/First_programmable_quantum_computer_created.

* Stanford Encyclopedia of Philosophy: "Quantum Computing" by Amit Hagar.
